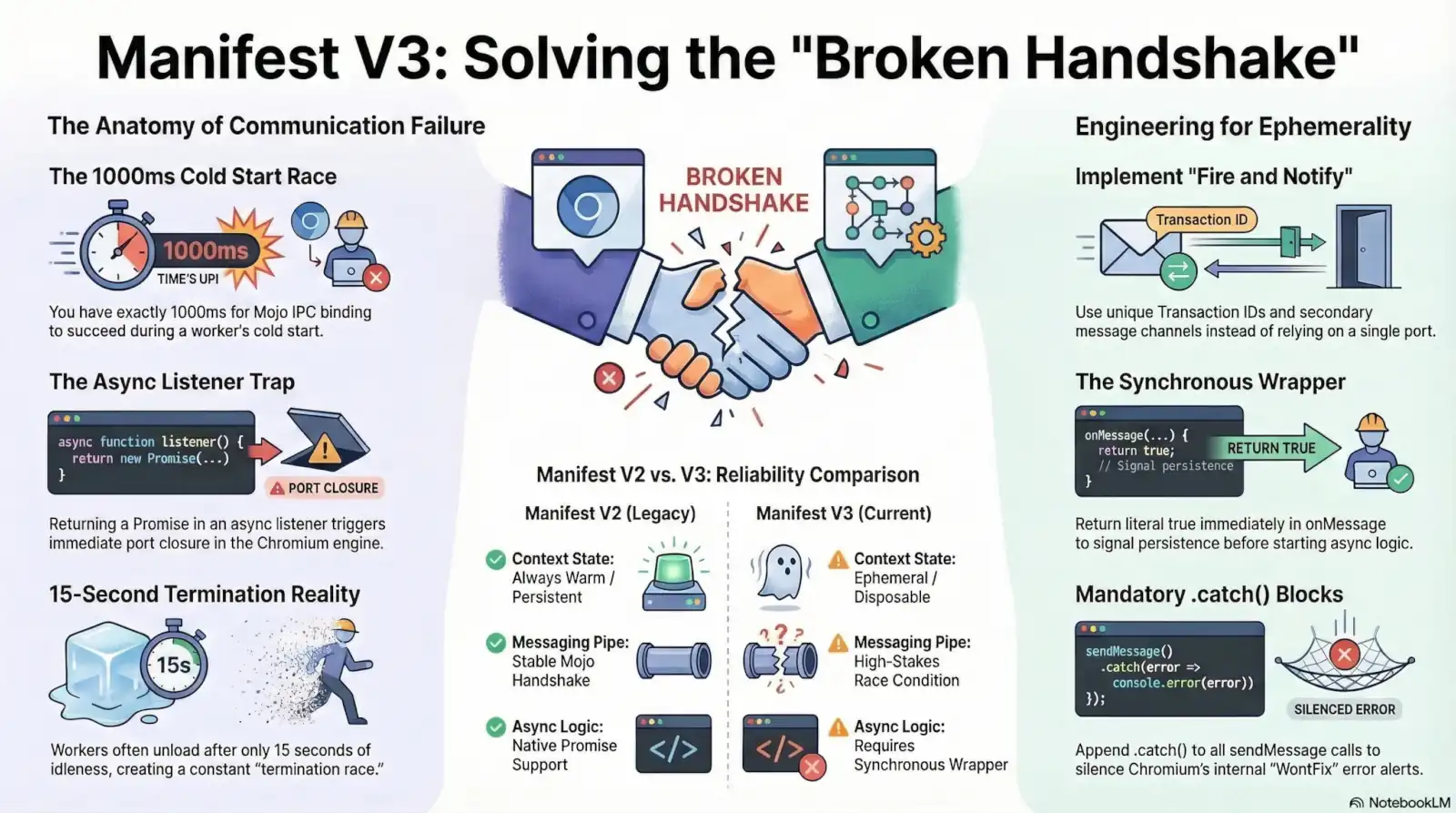

You added return true. You followed the documentation to the letter. The console still reports “The message port closed before a response was received”. This error is the most ubiquitous symptom of the transition from Manifest V2’s persistent background pages to the ephemeral Service Worker environment of Manifest V3. In the old model, the background context was always warm and ready. In Manifest V3, every communication is a high-stakes race against a clock you cannot see.

Contemporary developer discourse often reduces this failure to a simple syntax error. Closer investigation shows the cause is structural. The breakdown occurs inside the Chromium internals and involves low-level race conditions and synchronization lapses in Mojo IPC (Inter-Process Communication).

The error is specifically triggered when the MessagePortHost in the browser process detects a disconnection from the receiving renderer before a valid response is signaled or transmitted. This “Broken Handshake” is not a mere coding oversight; it is a structural byproduct of how Chromium manages port lifecycles and V8 engine execution constraints. Reliability in this environment must be engineered, not assumed.

The Theory/Reality Gap: Why Manifest V3 Communication Fails

The stability of Manifest V2 relied on a persistent, “Always Warm” background page that served as a reliable anchor for the extension’s logic. Manifest V3 removes that anchor. Replacing the persistent background page with an ephemeral Service Worker has introduced systemic communication failures, because it fundamentally changes how Chromium handles extension lifecycles.

The usual diagnosis is a missing return true statement. That diagnosis is reductive. The “The message port closed before a response was received” error is a structural byproduct of the move to ephemerality, not merely a coding oversight. When a message is sent in MV3, the browser does not just pass data. It initiates a Mojo IPC request to revive a potentially stopped worker.

Mojo IPC is the multi-process communication system Chromium uses to pass messages between the renderer process (where your script lives) and the browser process (the orchestrator). In MV3, a Mojo pipe is the transport behind every message port.

Chromium now mandates a delicate orchestration of process revival and message dispatching. If the browser process attempts to deliver a message via the Mojo pipe but the Service Worker fails to respond within the expected lifecycle window, the port collapses. This is the Broken Handshake. The receiver hangs up before the sender even begins to speak. You are no longer communicating with a persistent entity. You are attempting to wake a sleeping process and transmit data simultaneously.

This dual-requirement creates a race condition where the infrastructure itself is the bottleneck. The shift from persistence to ephemerality necessitates a move toward defensive engineering. The risk is no longer just logic errors, but the systemic latency inherent in the revival process itself.

Ugly Truth 1: The 1000ms Cold Start Initialization Race

Your local development environment is a lie. The extension that passes every smoke test on a high-end workstation fails instantly on a user’s throttled laptop or an underpowered Chromebook. This discrepancy is not a mystery; it is a direct consequence of environment-dependent latency. When a message is dispatched to an idle Service Worker, Chromium initiates a “cold start.” You have exactly 1000ms for the Mojo IPC binding to succeed.

If your script execution exceeds this 1000ms window, the ExtensionHost aborts the attempt. The port closes before your code even reaches the listener registration line. This is the first stage of the Broken Handshake. Large top-level dependencies, such as heavy cryptographic libraries or complex framework initializations, are the primary culprits. They inflate the “Time to Listener” (TTL) beyond the browser’s patience.

Every line of code outside your onMessage listener (imports, constant initializations, and library setups) must execute before Chromium can bind the message port. If this “cold start” takes longer than 1000ms, the port closes before your listener even exists.
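One way to shorten the Time to Listener is to register the handler first and defer heavy setup until a message actually needs it. The sketch below is a minimal illustration under stated assumptions: `lazy` is a hypothetical helper, not a Chrome API, and the object returned by the factory stands in for whatever expensive library your worker really loads.

```javascript
// Memoize an expensive initializer so it runs at most once, on first use.
function lazy(factory) {
  let cached;
  return () => (cached ??= Promise.resolve().then(factory));
}

// Hypothetical stand-in for heavy top-level work (crypto setup, framework boot).
const getHeavyLibrary = lazy(() => ({ hash: (text) => `hashed:${text}` }));

// The listener is cheap to define, so it can be registered immediately.
function onMessage(message, sender, sendResponse) {
  getHeavyLibrary()
    .then((lib) => sendResponse({ ok: true, value: lib.hash(message.text) }))
    .catch((error) => sendResponse({ ok: false, error: String(error) }));
  return true; // synchronous signal that a response will follow
}

// Register at the top level of the worker script, before any heavy work.
// The guard only exists so the sketch can also be loaded outside an extension.
if (typeof chrome !== "undefined" && chrome.runtime?.onMessage) {
  chrome.runtime.onMessage.addListener(onMessage);
}
```

The expensive work moves off the cold-start path: the first message pays the setup cost, and later messages reuse the memoized result.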

The official documentation describes a 30-second idle timer. Forensic evidence suggests a tighter reality. If a Service Worker fails to register its onMessage listener within the initialization window, the browser-side ExtensionHost may fail to deliver the message, which produces the port closure error. Measurements in real-world conditions show that workers are often unloaded after as little as 15 seconds of idleness. This creates a “termination race”: a message that arrives during unloading meets a closed port, because the MessagePortHost cannot bind to a context that is being torn down.

This 15-second reality means your worker is constantly cycling between active and terminated states. Any message arriving during the teardown phase hits a dead end. The MessagePortHost cannot attach to a process that is currently being purged from memory. You are fighting a structural race condition where the platform prioritizes resource reclamation over communication integrity.

Ugly Truth 2: V8 Microtask Queue and the Async Listener Trap

You thought async/await would make your code cleaner. Instead, it killed your communication pipe. The very syntax designed to simplify asynchronous flow is the primary architect of the Broken Handshake in Manifest V3. Developers naturally reach for async listeners to handle database lookups or network requests, assuming the environment respects modern JavaScript patterns. It does not.

The failure is rooted in a collision between modern language features and a legacy C++ dispatcher. When an async function is used as an onMessage listener, it implicitly returns a Promise. The Chromium dispatcher, however, checks for a synchronous return value of literal true. A Promise object is truthy in JavaScript, but it is not the literal true, and the dispatcher does not await it. The dispatcher therefore treats the return value as a signal that no response is coming and triggers an immediate MessagePortHost closure, before the V8 microtask queue can even process the first await statement.

In JavaScript, an async function always returns a Promise. Even if you return true at the very end of that function, the Chromium engine sees a Promise object during the synchronous execution of the listener. Since the engine is looking for the primitive true, the check fails instantly.

By the time your code hits its first await, the browser process has already decided you have nothing more to say. It marks the port as eligible for garbage collection and severs the link. A return true inside an async function is effectively invisible: the dispatcher has moved on before V8 can even schedule your microtask. You are being penalized for using standard, readable code in a runtime that demands synchronous primitives to manage asynchronous lifecycles.
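The return-value mismatch is easy to reproduce without any extension APIs. This small sketch uses two hypothetical listeners with the onMessage signature and compares what each hands back to its caller:

```javascript
// An async function wraps whatever it returns in a Promise.
async function asyncListener(message, sender, sendResponse) {
  return true;
}

// A plain function hands the literal value straight back to the caller.
function syncListener(message, sender, sendResponse) {
  return true;
}

const fromAsync = asyncListener({}, {}, () => {});
const fromSync = syncListener({}, {}, () => {});

console.log(fromAsync === true);           // false
console.log(fromAsync instanceof Promise); // true
console.log(fromSync === true);            // true
```

A caller that performs a strict comparison against true, as the dispatcher described above does, only ever sees the third case succeed.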

Ugly Truth 3: The Chromium “WontFix” and Internal Promisification

The error in your console is not always a sign of broken code. Often, it is a deliberate noise floor maintained by the Chromium project. The “The message port closed before a response was received” error is often a false positive generated by the system’s internal promisification of the sendMessage API. In the transition to Manifest V3, the browser converted the once-callback-based messaging system into a Promise-based architecture. This shift introduced an aggressive, internal watchdog mechanism.

When you send a message without expecting a response—a “fire-and-forget” notification—the internal promise remains pending. If the receiver terminates or the port closes, the system interprets this as a failure of communication rather than a successful one-way transmission. The “WontFix” (or “Working as Intended”) aspect arises from the fact that Chromium developers consider this behavior helpful for debugging, as it identifies cases where a response might have been expected but not sent. This stance, documented under Issue 40826436, prioritizes internal engine visibility over developer ergonomics.

Even if you don’t care about a response, Manifest V3’s internal architecture does. Because sendMessage returns a Promise whenever no callback is passed, the engine attaches an internal listener that screams “failure” if that promise isn’t explicitly resolved, even for one-way notifications.

The browser process treats every sendMessage call as a request for acknowledgment. If the MessagePortHost does not receive a signal before the context evaporates, it logs a violation. To Chromium engineers, a silent failure is an analytical blind spot; they prefer a loud false positive. You are forced to navigate a console filled with “errors” that are, by design, non-negotiable by-products of the platform’s internal plumbing. The system demands a handshake even when you have nothing more to say.

The Forensic Mitigation: Implementing the “Fire and Notify” Pattern

Stop attempting to hold the door open. In the non-deterministic environment of Manifest V3, the “Broken Handshake” is inevitable if you rely on a single, long-lived connection. To stabilize production code, you must move from a single-channel dependency to a decoupled, “double-knock” system.

The immediate tactical response is the synchronous wrapper. Do not make your onMessage listener async. Instead, use a standard function that starts your asynchronous logic without awaiting it and then returns literal true synchronously. This ensures the MessagePortHost receives the persistence signal before the V8 engine clears the current execution context and reaps the port.
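The synchronous wrapper can be sketched as a small adapter. The names `toListener` and `handleMessage` are hypothetical, and the response shape (`ok`, `result`, `error`) is an assumption made for illustration, not something the messaging API prescribes.

```javascript
// Wrap an async handler in a plain (non-async) listener.
function toListener(asyncHandler) {
  return function listener(message, sender, sendResponse) {
    // Start the async work without awaiting it.
    Promise.resolve()
      .then(() => asyncHandler(message, sender))
      .then(
        (result) => sendResponse({ ok: true, result }),
        (error) => sendResponse({ ok: false, error: String(error) })
      )
      .catch(() => {}); // the port may already be gone; treat that as non-fatal
    // Returned synchronously, before any await inside the handler runs.
    return true;
  };
}

// Hypothetical handler; real code might read storage or call fetch here.
async function handleMessage(message) {
  return { echoed: message };
}

const listener = toListener(handleMessage);

// The guard only exists so the sketch can also be loaded outside an extension.
if (typeof chrome !== "undefined" && chrome.runtime?.onMessage) {
  chrome.runtime.onMessage.addListener(listener);
}
```

The handler stays readable async code. Only the outer function, the one the dispatcher actually calls, has to be synchronous.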

For complex operations, tactical fixes are insufficient. To bypass the fragile one-way sendMessage Promise and the V8 microtask race, developers must implement a ‘Fire and Notify’ architecture. This involves the sender dispatching a unique ‘Transaction ID’ and the receiver responding via a secondary, independent message channel once the task is complete, rather than relying on the caller’s internal listener to stay alive during long-running async operations. This architectural shift acknowledges that the initial port is ephemeral. You fire the request, assign a UUID (Transaction ID), and wait for a completely new sendMessage call from the Service Worker to report the result.
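A minimal sketch of the Fire and Notify flow follows. Everything named here is hypothetical: `createRequester`, `createWorker`, the `kind` field, and the message shape are illustrative choices, and `send` is an injected function that in a real extension would wrap chrome.runtime.sendMessage or chrome.tabs.sendMessage depending on direction.

```javascript
// Sender side: track requests by transaction ID instead of holding a port open.
function createRequester(send) {
  const pending = new Map();
  const newId = () =>
    globalThis.crypto?.randomUUID?.() ??
    `${Date.now()}-${Math.random().toString(16).slice(2)}`;

  return {
    // Fire: dispatch the request and return a promise keyed by txId.
    request(payload) {
      const txId = newId();
      const done = new Promise((resolve) => pending.set(txId, resolve));
      // The first channel is treated as lossy; its own outcome is ignored.
      Promise.resolve()
        .then(() => send({ kind: "request", txId, payload }))
        .catch(() => {});
      return done;
    },
    // Notify: call this from the sender's own onMessage listener.
    handleIncoming(message) {
      if (message?.kind !== "result" || !pending.has(message.txId)) return false;
      pending.get(message.txId)(message.result);
      pending.delete(message.txId);
      return true;
    },
  };
}

// Receiver side: do the work, then report back with a brand-new message.
function createWorker(send, work) {
  return function onRequest(message) {
    if (message?.kind !== "request") return;
    Promise.resolve()
      .then(() => work(message.payload))
      .then((result) => send({ kind: "result", txId: message.txId, result }))
      .catch(() => {});
    // No `return true` here: the original port is allowed to close.
  };
}
```

This sketch never times out a pending request. Production code would likely reject or retry after a deadline, since the notification itself can be lost if the worker is reaped before it sends.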

Because Chromium internally promisifies sendMessage, append a .catch(() => { /* ignore */ }) to every call that returns a Promise. This isn’t just “good practice”. It is the most direct way to silence the engine’s internal “WontFix” alerts when a message is successfully delivered but the port is reaped.

Defensive engineering requires accepting the “WontFix” reality. Even a perfectly executed “Fire and Notify” sequence can trigger a port closure error if the Service Worker unloads a millisecond too early. Wrap every chrome.runtime.sendMessage invocation in a try/catch block around an awaited call, or add a trailing .catch(). A try/catch around a call that is not awaited will not see the rejection. This suppresses the false-positive telemetry that otherwise pollutes your error logs. You are not fixing a bug; you are filtering out the noise of the Chromium runtime’s aggressive resource reclamation. Handle the race by treating the messaging pipe as lossy by design.
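Both suppression styles can be sketched as small helpers. `sendQuietly` and `sendAwaited` are hypothetical names, and `send` is an injected function so the sketch runs outside an extension.

```javascript
// Send a one-way message and swallow the rejection that follows a reaped port.
function sendQuietly(send, message) {
  return Promise.resolve()
    .then(() => send(message))
    .catch(() => undefined); // treat the pipe as lossy: no response is not fatal
}

// The same idea with try/catch, which only works because the call is awaited.
async function sendAwaited(send, message) {
  try {
    return await send(message);
  } catch {
    return undefined;
  }
}

// Inside an extension, the wiring might look like this:
// sendQuietly((m) => chrome.runtime.sendMessage(m), { kind: "ping" });
```

Swallowing every rejection also hides genuine failures, so it may be worth logging the error at a low severity instead of discarding it outright.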

The Final Verdict: Engineering for Ephemerality

Manifest V3 is not a bug; it is a resource management strategy. The persistent background page was a luxury the Chromium engine no longer affords. The “Broken Handshake” is the inevitable result of a platform that prioritizes low memory overhead and battery life over developer ergonomics. You are not “fixing” a message port error; you are adapting to a runtime that views your extension as disposable.

The transition to Manifest V3 represents a paradigm shift where the browser process no longer guarantees the availability of the receiver’s execution context. Modern extension development is no longer about maintaining a stateful background environment; it is about building stateless, event-driven systems that anticipate their own termination. This is the forensic reality. Every communication must be treated as a potential failure. Every listener must assume the worker is already being reaped.

In MV3, your Service Worker is a ghost. It appears, handles a task, and vanishes. Any communication logic that assumes the worker is “standing by” will eventually trigger a port closure error.
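Building for a worker that vanishes means keeping state somewhere that outlives it. The sketch below assumes a storage object with promise-based get and set, shaped like chrome.storage.session or chrome.storage.local. `createCounter` and `createMemoryStorage` are hypothetical helpers, and the in-memory stand-in exists only so the idea can be tried outside an extension.

```javascript
// Keep state in storage, not in worker globals that vanish on termination.
function createCounter(storage, key = "handledCount") {
  return {
    async increment() {
      const stored = await storage.get(key);
      const next = (stored[key] ?? 0) + 1;
      await storage.set({ [key]: next });
      return next;
    },
  };
}

// In-memory stand-in for a promise-based storage area.
function createMemoryStorage() {
  const data = {};
  return {
    async get(key) {
      return key in data ? { [key]: data[key] } : {};
    },
    async set(items) {
      Object.assign(data, items);
    },
  };
}
```

Each call builds its result from storage rather than from a module-level variable, so a freshly revived worker continues where the previous one stopped. The read-modify-write here is not atomic, so concurrent increments could still race.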

The goal is no longer to keep the port open. The goal is to ensure your architecture survives its closure. Stop fighting the Chromium lifecycle. Start engineering for ephemerality.

EDGE CASES & FAQ

Returning true from an async listener fails because the dispatcher sees a Promise object instead of a boolean.

Related Citations & References

- Chromium

- Chromium

- javascript – ManifestV3 new promise error: The message port closed before a response was received – Stack Overflow

- What are the execution time limits for the service worker in Manifest V3?

- Reliably calling setTimeout in service worker

- Chromium

- Console error showing up on all origins on Chrome 68 · Issue #339 · igrigorik/videospeed · GitHub

- Out of control app calls to token and authorize endpoint ending with error · Issue #1703 · okta/okta-signin-widget · GitHub

- Chrome: "The message port closed before a response was received." · Issue #130 · mozilla/webextension-polyfill · GitHub

- javascript – How to avoid "The message port closed before a response was received" error when using await in the listener – Stack Overflow

- How to fix 'Unchecked runtime.lastError: The message port closed before a response was received' chrome issue? on console – Stack Overflow

- Source/core/dom/Document.cpp – chromium/blink – Git at Google

- Diff – 6da2ebcead..3fe2409358 – chromium/src – Git at Google

- javascript – How to handle "Unchecked runtime.lastError: The message port closed before a response was received"? – Stack Overflow

- Using async functions with chrome.runtime.onMessage | dt.in.th

- Some cosmetic filters are not working in embedded video on Firefox extension · Issue #2103 · AdguardTeam/AdguardBrowserExtension · GitHub

- Unchecked runtime.lastError: The message port closed before a response was received. – Google Chrome Community