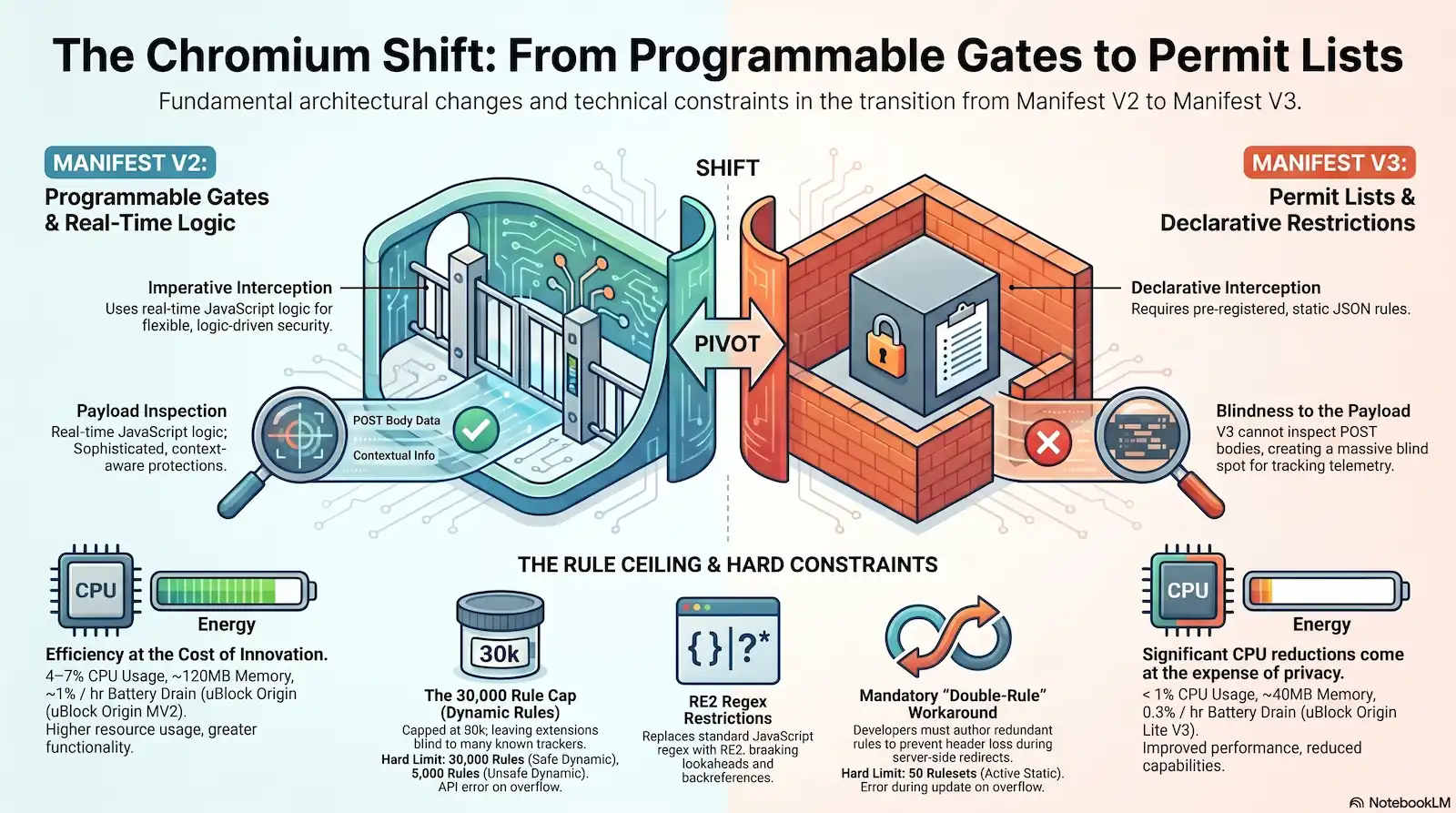

The engineering transition from Manifest V2 to Manifest V3 represents the most disruptive architectural shift in the history of the Chromium project. For over a decade, developers operated under a “Programmable Security Gate” model. In this imperative environment, you utilized the webRequest API to execute arbitrary JavaScript logic on every network request. You were the guard at the gate, inspecting headers, timing, and origins in real-time to make context-aware decisions. That sovereignty is being dismantled.

At the center of this upheaval is the declarativeNetRequest (DNR) API. This mechanism replaces high-privilege interception with a restrictive, declarative model. The official narrative from browser vendors frames this transition as a victory for performance and user privacy. However, a forensic investigation into the Chromium source code and developer post-mortems reveals a landscape defined by hard-coded constraints and undocumented behavioral failures.

The migration forces a shift from “logic-driven” to “data-driven” interception. You no longer “decide” if a request should pass; you “declare” your intentions upfront through JSON-formatted rules. The browser’s network service—not your extension—now handles the matching logic. This moves the decision-making power to a static matching engine that lacks the ability to account for runtime variables or complex state.

Manifest V2 (Imperative) allows real-time code execution to handle requests; Manifest V3 (Declarative) requires pre-registered rule lists that the browser engine evaluates independently of the extension’s background process.
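To make the contrast concrete, here is a minimal sketch that places an MV2-style blocking listener next to a roughly equivalent DNR rule. The host `tracker.example`, the helper `isTrackerUrl`, and the two `register…` wrappers are hypothetical names chosen for illustration; only the `chrome.webRequest` and `chrome.declarativeNetRequest` calls are real extension APIs, and they run only inside an extension.

```javascript
// Hypothetical example host; not taken from any real filter list.
const TRACKER_HOST = "tracker.example";

// MV2 (imperative): arbitrary JavaScript decides the fate of each request.
function isTrackerUrl(url) {
  try {
    const host = new URL(url).hostname;
    return host === TRACKER_HOST || host.endsWith("." + TRACKER_HOST);
  } catch {
    return false; // unparseable URL: let it pass
  }
}

function onBeforeRequestMv2(details) {
  return { cancel: isTrackerUrl(details.url) };
}

// MV3 (declarative): the same intent expressed as data the browser evaluates.
const blockTrackerRule = {
  id: 1,
  priority: 1,
  action: { type: "block" },
  condition: {
    urlFilter: "||" + TRACKER_HOST + "^",
    resourceTypes: ["script", "xmlhttprequest", "image"],
  },
};

// MV2 only: needs the "webRequest" and "webRequestBlocking" permissions.
function registerMv2() {
  chrome.webRequest.onBeforeRequest.addListener(
    onBeforeRequestMv2,
    { urls: ["<all_urls>"] },
    ["blocking"]
  );
}

// MV3: hand the rule to the browser; no extension code runs per request.
function registerMv3() {
  return chrome.declarativeNetRequest.updateDynamicRules({
    removeRuleIds: [blockTrackerRule.id],
    addRules: [blockTrackerRule],
  });
}
```

The listener can consult any runtime state before answering; the rule can only describe a URL shape and a set of resource types ahead of time.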

The engineering transition from Manifest V2 to Manifest V3 is the most disruptive architectural shift in the history of the Chromium project. While Google frames this as a victory for performance, a forensic analysis reveals a landscape of hard-coded constraints and undocumented behavioral failures. We are moving from the high-privilege webRequest API to a restrictive, declarative model that fundamentally alters how extensions interact with the network stack.

The technical reality is a transition from an open platform to a deterministic, resource-constrained environment. By removing the “process hop” between the network thread and the extension, the browser gains marginal efficiency at the cost of the most sophisticated privacy protections.

The Rule Ceiling: Managing Deterministic Resource Scarcity

In Manifest V2, your filtering logic was limited only by your imagination and the execution time of your background script. Manifest V3 terminates that sovereignty. You are transitioning from a “Programmable Security Gate” where you wrote the laws in real-time, to a “Pre-Printed Permit List” governed by deterministic resource scarcity. Your extension is no longer an active participant in the network stack; it is a budget-constrained tenant in a shared, finite pool of resources.

The “Ugly Truth” is that the Chromium engine now treats network interception as a commodity to be rationed. Under this new regime, sophisticated privacy tools face immediate resource starvation. A standard EasyList subscription contains approximately 75,000 rules. Under the 30,000-rule ceiling for safe dynamic rules, your extension is effectively blind to more than half of the web’s known tracking and advertising telemetry before you even write a single line of custom code.

The following architectural constraints, extracted from Chromium Gerrit Commit d8fa5c67 and the AdGuard Engineering Blog, define the boundaries of your new environment:

| Rule Category | Constant Definition | Hard Limit Value | Browser Behavior on Overflow |
|---|---|---|---|
| Static Rulesets (Total) | MAX_NUMBER_OF_STATIC_RULESETS | 100 Rulesets | Registration failure during installation. |
| Active Static Rulesets | MAX_NUMBER_OF_ENABLED_STATIC_RULESETS | 50 Rulesets | Error during updateEnabledRulesets. |
| Safe Dynamic Rules | MAX_NUMBER_OF_DYNAMIC_RULES | 30,000 Rules | API error: no rules added. |
| Unsafe Dynamic Rules | MAX_NUMBER_OF_UNSAFE_DYNAMIC_RULES | 5,000 Rules | API error: no rules added. |

The distinction between “Safe” and “Unsafe” rules is clinical. Safe rules are limited to the block, allow, allowAllRequests, and upgradeScheme actions. “Unsafe” rules, those that redirect requests or modify request or response headers, are capped at a negligible 5,000 entries. This is a forensic signature of the browser vendor’s intent: limit the extension’s ability to manipulate the metadata of the web.

Do not expect the browser to truncate your list to fit the limit.

The behavior when these limits are hit is uniform across Chromium-based browsers: the API call fails, and the rule set remains unchanged. In the Chromium source file declarative_net_request.idl, the documentation explicitly states that if the MAX_NUMBER_OF_DYNAMIC_RULES is exceeded, an error message is generated, and no changes are made to the rule set.

DNR updates are strictly atomic. If your update logic attempts to push the total count to 30,001, the entire transaction is rejected. The browser reverts to the previous state, leaving your user unprotected against the new threats you intended to block. You are now forced to implement rigorous rule-counting logic and manual garbage collection within your extension. You are no longer just a developer; you are an accountant managing a dwindling resource budget to avoid silent UX failures.
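The rule-counting logic described above could look something like the following sketch. The limits are the ones quoted in this article; the set of "safe" action types reflects my reading of Chrome's documentation and should be treated as an assumption, and `planDynamicUpdate` and `safeUpdateDynamicRules` are hypothetical helper names. Only `getDynamicRules` and `updateDynamicRules` are real API calls.

```javascript
// Limits as quoted in this article.
const MAX_DYNAMIC_RULES = 30000;
const MAX_UNSAFE_DYNAMIC_RULES = 5000;

// Assumption: any action type outside this set counts against the unsafe cap.
const SAFE_ACTIONS = new Set(["block", "allow", "allowAllRequests", "upgradeScheme"]);

function isUnsafe(rule) {
  return !SAFE_ACTIONS.has(rule.action.type);
}

// Pure check: would the rule set fit the budget after this update?
function planDynamicUpdate(existingRules, addRules, removeRuleIds = []) {
  const removed = new Set(removeRuleIds);
  const finalRules = existingRules
    .filter((rule) => !removed.has(rule.id))
    .concat(addRules);
  const total = finalRules.length;
  const unsafe = finalRules.filter(isUnsafe).length;
  return {
    total,
    unsafe,
    fits: total <= MAX_DYNAMIC_RULES && unsafe <= MAX_UNSAFE_DYNAMIC_RULES,
  };
}

// Extension-only wrapper: refuse an oversized update before the browser does,
// so the caller can trim or garbage-collect rules and retry.
async function safeUpdateDynamicRules(addRules, removeRuleIds = []) {
  const existing = await chrome.declarativeNetRequest.getDynamicRules();
  const plan = planDynamicUpdate(existing, addRules, removeRuleIds);
  if (!plan.fits) {
    throw new Error(
      `Update would exceed DNR budget: ${plan.total} total, ${plan.unsafe} unsafe`
    );
  }
  await chrome.declarativeNetRequest.updateDynamicRules({ addRules, removeRuleIds });
  return plan;
}
```

Keeping the arithmetic in a pure function means the budget can be unit-tested without a browser, and the failure surfaces as an explicit error in your own code rather than a rejected API call.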

The Condition Paradox: Lost Logic in Declarative Matching

You are now blind to the payload. For a senior developer accustomed to the total visibility of the webRequest API, the realization that you can no longer inspect the contents of a POST request is the primary human friction point of this migration. In the old “Programmable Security Gate” model, you could pause a request, parse the body, and block it if it contained sensitive user telemetry. In the new world of the “Pre-Printed Permit List,” you are forbidden from looking inside the trunk.

This is the Condition Paradox. declarativeNetRequest (DNR) operates purely on the metadata of a request—URLs, domains, and resource types. There is no mechanism in the current DNR specification for an extension to “declare” a rule that blocks a request if the POST body contains a specific string or follows a specific schema. This creates a massive blind spot in the browser’s defense architecture. Tracking companies are not blind to this limitation; they can simply move their exfiltration logic from URL parameters to the request body to bypass DNR-based filtering entirely.

Any tracking data exfiltrated via the request body (POST/PUT methods) is now invisible to the DNR matching engine. Extensions cannot block or redirect based on the presence of PII or tracking tokens within these payloads.
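For comparison, this is roughly what payload inspection looked like under MV2. The sketch assumes a hypothetical token name (`user_email`) and hypothetical helpers (`bodyContains`, `blockIfBodyLeaks`, `registerMv2BodyFilter`); the `requestBody` shape with `formData` and `raw` follows the webRequest API. No DNR rule condition can express the same check.

```javascript
// Returns true if an MV2 webRequest `requestBody` object contains `needle`.
function bodyContains(requestBody, needle) {
  if (!requestBody) return false;

  // Form submissions arrive pre-parsed as { fieldName: [values] }.
  if (requestBody.formData) {
    for (const [key, values] of Object.entries(requestBody.formData)) {
      if (key.includes(needle)) return true;
      if (values.some((value) => String(value).includes(needle))) return true;
    }
  }

  // Other payloads (for example JSON) arrive as raw byte chunks.
  // Simplification: each chunk is decoded on its own, so a multi-byte
  // character split across two chunks would not decode cleanly.
  if (requestBody.raw) {
    const decoder = new TextDecoder("utf-8");
    const text = requestBody.raw
      .filter((part) => part.bytes)
      .map((part) => decoder.decode(part.bytes))
      .join("");
    if (text.includes(needle)) return true;
  }
  return false;
}

// Blocking listener: cancel POST requests whose body carries the token.
function blockIfBodyLeaks(details) {
  const leaks =
    details.method === "POST" && bodyContains(details.requestBody, "user_email");
  return { cancel: leaks };
}

// MV2 only: "requestBody" must be requested explicitly in extraInfoSpec.
function registerMv2BodyFilter() {
  chrome.webRequest.onBeforeRequest.addListener(
    blockIfBodyLeaks,
    { urls: ["<all_urls>"] },
    ["blocking", "requestBody"]
  );
}
```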

The loss of logic extends to the Timing Paradox. In Manifest V2, your blocking logic was synchronous and context-aware. You knew the state of the browser the moment a request was fired. Manifest V3 introduces a “race condition” where the first few requests to a malicious site might bypass the extension because the declarative ruleset had not yet been updated or indexed by the browser’s network service. An extension cannot make a decision based on the state of the browser at the exact millisecond a request is made. You are relying on a static snapshot of intent to fight a dynamic, millisecond-fast web.

Causality itself is fractured by the Temporal Trap. Under the declarative model, the sequence of network events is immutable. A rule cannot use a response header condition to modify a request header—the request headers have already been sent to the server by the time the response headers are available for matching. This architectural rigidity makes legacy features like “strip headers only if the server returns a specific status code” technically impossible. You are forced to choose between over-blocking or allowing potential leaks, as the browser no longer permits the “if-then” logic that defined sophisticated extension behavior for a decade.

Regex Constraints: The RE2 Feature-Parity Failure

You have likely already encountered the validation error: “Invalid regex.” In the transition to Manifest V3, the browser vendors replaced the flexible, standard JavaScript regex engine with RE2. This was not a choice made for your benefit. It was a choice made to guarantee linear execution time at the cost of your filtering precision. The RE2 engine isn’t an upgrade; it’s a sandbox with the walls moved inward.

The forensic reality of RE2 is a feature-parity failure that renders sophisticated patterns useless. If your MV2 extension relied on complex matching to identify obfuscated trackers, those rules are now dead.

| Feature | Support in MV2 (JS) | Support in MV3 (DNR) | Impact on Filtering |
|---|---|---|---|
| Negative Lookaheads | Supported | Unsupported | Impossible to target “anything except X”. |
| Backreferences | Supported | Unsupported | Cannot match repeated randomized strings. |
| Possessive Quantifiers | Unsupported (not part of JavaScript RegExp) | Unsupported | No change; neither engine accepts them. |
| Maximum Count | Unlimited | 1,000 per extension | Forces reliance on simpler, less precise patterns. |

The following regex features are rejected by the RE2 engine in the DNR context:

- Negative Lookaheads (e.g., `(?!...)`)
- Positive Lookaheads (e.g., `(?=...)`)
- Backreferences (e.g., `\1`)
- Possessive Quantifiers (e.g., `.*+`), which JavaScript’s own RegExp also rejects

The 1,000-rule computational cap on regex rules per extension is a hard limit designed to protect the browser’s network thread from high-complexity matching. This is the ultimate “Pre-Printed Permit List” constraint. When you hit this ceiling, the API does not offer a fallback. You are forced to decompose precise, single-regex rules into multiple, less efficient glob patterns or risk the entire ruleset failing to register. This loss of expressive power directly benefits the trackers you are attempting to block. Sophisticated scripts now hide in the syntax gaps where RE2 cannot reach.
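Before spending part of that regex budget, it can help to screen patterns up front. The sketch below is a coarse, hypothetical pre-filter (`findRe2Problems`) that looks for the constructs listed above; it is a heuristic, not a parser, and can mis-flag escaped sequences. Inside an extension, `chrome.declarativeNetRequest.isRegexSupported` remains the authoritative check, and the wrapper defers to it when available.

```javascript
// Heuristic signatures of constructs RE2 rejects. Coarse by design:
// a literal backslash followed by a digit, for example, is mis-flagged.
const RE2_UNSUPPORTED = [
  { name: "lookahead", test: /\(\?[=!]/ },
  { name: "lookbehind", test: /\(\?<[=!]/ },
  { name: "backreference", test: /\\[1-9]/ },
  { name: "possessive quantifier", test: /[*+?}]\+/ },
];

// Returns the names of suspicious constructs found in the pattern source.
function findRe2Problems(regex) {
  return RE2_UNSUPPORTED
    .filter((feature) => feature.test.test(regex))
    .map((feature) => feature.name);
}

// Prefer the browser's own verdict when running inside an extension;
// fall back to the heuristic elsewhere (build scripts, tests).
async function checkRegex(regex) {
  const problems = findRe2Problems(regex);
  if (typeof chrome !== "undefined" && chrome.declarativeNetRequest) {
    const result = await chrome.declarativeNetRequest.isRegexSupported({ regex });
    return { supported: result.isSupported, reason: result.reason, problems };
  }
  return { supported: problems.length === 0, reason: undefined, problems };
}
```

Running the heuristic in a build step lets you reject or rewrite a pattern before it ever reaches the browser, which matters when a single invalid rule can cause a whole update to be refused.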

Solving the Redirect Header-Drop: A Critical Silent Failure

Your custom headers are disappearing. You have verified your ruleset. You have double-checked the debugger. Yet, your user reports a broken login or a tracker bypass because a mandatory authentication token or security flag simply vanished mid-transit. This is the Redirect Header-Drop, a critical silent failure that exposes the functional gap between imperative persistence and declarative transience.

In the legacy “Programmable Security Gate” of Manifest V2, you held the request object in a synchronous lock. Because you operated within the webRequest lifecycle, you could guarantee header persistence across a redirect chain. You were the authority throughout the entire flight of the request. Manifest V3 replaces this with a “Pre-Printed Permit List” that is fundamentally stateless.

In the declarativeNetRequest implementation, specifically for modifyHeaders rules, there is a documented silent drop during server-side redirects (301/302). When a request is redirected, the headers injected by the initial DNR rule are not automatically carried over to the new request URL unless a secondary, explicit rule matches the redirect destination. This is tracked as a critical architectural oversight in W3C WebExtensions Issue #694.

The matching engine evaluates each hop in a redirect chain as an isolated event. If your rule matches example.com but the server issues a 302 to api.example.com, your injected headers are dead on arrival at the destination. The browser does not “remember” the modification from the first leg of the journey.

To solve this, you must adopt a “Double-Rule” workaround. This strategy forces you to anticipate the redirect destination and author a redundant rule to catch the headers on the bounce. This doubles your rule consumption for a single logical operation, further straining your finite resource budget.

```json
[
  {
    "id": 1,
    "priority": 1,
    "action": {
      "type": "modifyHeaders",
      "requestHeaders": [
        {
          "header": "X-Auth-Token",
          "operation": "set",
          "value": "forensic-id-001"
        }
      ]
    },
    "condition": {
      "urlFilter": "example.com",
      "resourceTypes": ["main_frame"]
    }
  },
  {
    "id": 2,
    "priority": 1,
    "action": {
      "type": "modifyHeaders",
      "requestHeaders": [
        {
          "header": "X-Auth-Token",
          "operation": "set",
          "value": "forensic-id-001"
        }
      ]
    },
    "condition": {
      "urlFilter": "api.example.com",
      "resourceTypes": ["xmlhttprequest"]
    }
  }
]
```

This architectural burden shifts the responsibility of state management from the browser’s network stack to your JSON manifest. You are no longer writing logic; you are manually mapping every potential movement of a request to prevent the browser from “forgetting” your instructions.
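Because every anticipated hop needs its own rule, it may be less error-prone to generate the redundant rules than to write them by hand. The following sketch assumes a hypothetical helper, `buildHeaderRulesForChain`, and reuses the hosts and header from the example above; the `||host^` filter syntax is the standard DNR domain anchor, which also matches subdomains.

```javascript
// Build one modifyHeaders rule per hop in an anticipated redirect chain.
// Each hop is { host, resourceTypes }; ids are assigned sequentially.
function buildHeaderRulesForChain(hops, header, value, startId = 1) {
  return hops.map((hop, index) => ({
    id: startId + index,
    priority: 1,
    action: {
      type: "modifyHeaders",
      requestHeaders: [{ header, operation: "set", value }],
    },
    condition: {
      // "||" anchors to the domain (and its subdomains); "^" is a separator.
      urlFilter: "||" + hop.host + "^",
      resourceTypes: hop.resourceTypes,
    },
  }));
}

// Usage: the same two-hop chain as the hand-written rules.
const chainRules = buildHeaderRulesForChain(
  [
    { host: "example.com", resourceTypes: ["main_frame"] },
    { host: "api.example.com", resourceTypes: ["xmlhttprequest"] },
  ],
  "X-Auth-Token",
  "forensic-id-001"
);
```

The generator does not reduce rule consumption: each hop still costs one rule against the budget. It only keeps the header name and value in one place so the copies cannot drift apart.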

Performance vs. Latency: Debunking the V3 Mandate

The official narrative claims the webRequest API was a performance bottleneck. Google frames the move to declarativeNetRequest (DNR) as a necessity to preserve browser responsiveness and battery life. This is an unverified mandate. A forensic analysis of real-world usage suggests the “performance crisis” of Manifest V2 was largely a convenient fiction used to justify the destruction of the “Programmable Security Gate.”

Data from a study by WhoTracks.me confirms that the overhead of synchronous webRequest blocking in leading ad-blockers was never a significant issue for users. The logic is simple: the milliseconds spent executing JavaScript to intercept a request are a rounding error compared to the hundreds of milliseconds saved by preventing the loading of heavyweight trackers and ad scripts. You aren’t “optimizing” the browser by removing the filter; you are just changing who controls the delay.

2024 benchmarks on MacBook Air M2 hardware show that while DNR extensions like uBlock Origin Lite have zero JavaScript execution at request time, they still add up to 0.3% per hour of idle battery drain. This improves on the roughly 1% per hour and the 4–7% CPU usage seen in legacy, logic-heavy filters, but the takeaway is that the benefit is an incremental optimization, not a seismic leap.

The transition trades one form of latency for another. We call this the “Abstraction Cost.” As rulesets approach the 330,000 rule cap, the browser’s network service must match every single request against an index compiled from a massive set of JSON rules. While the matching engine is implemented in C++, the sheer computational volume of evaluating these declarative rules creates its own bottleneck. You have replaced a flexible, high-speed gatekeeper with a bloated, deterministic permit list.

| Metric | uBlock Origin (MV2) | uBlock Origin Lite (DNR) | Performance Impact |
|---|---|---|---|
| Page Load Time | Baseline | -1.2% | Negligible Improvement |
| CPU Usage (Active) | 4–7% | < 1% | Significant Reduction |
| Memory Footprint | ~120MB | ~40MB | High Efficiency |
| Idle Battery Drain | ~1% / hr | 0.3% / hr | Incremental Gain |

Faster does not mean better if the protection is hollowed out. The browser vendors have prioritized a clinical “performance victory” over the sophisticated, context-aware filtering that defined the previous decade of web security. The technical reality is that efficiency came at the cost of innovation.

The Final Verdict: Architectural Conformity or Technical Decay?

The move to declarativeNetRequest is not an evolution; it is a forced migration into a pre-defined enclosure. The “Programmable Security Gate” has been dismantled. You are no longer permitted to run sovereign logic against the network stack. Instead, you are tasked with maintaining a “Pre-Printed Permit List” that must adhere to the browser’s internal constraints. This represents a fundamental pivot from a logic-first engineering mindset to a data-first compliance model.

Architectural Conformity: the forced alignment of extension capabilities with the browser’s internal network service constraints, regardless of the security or privacy cost.

The pivot to declarativeNetRequest is less a performance optimization and more an architectural disarmament. We have traded the sovereignty of imperative logic for the deterministic safety of a static ruleset. While Chromium maintains that this protects user privacy by moving interception out of the extension process, it simultaneously creates a “Permit List” reality. If a tracker isn’t on the list—or the list is full—the tracker wins by default. This is the era of Architectural Conformity.

The “Ugly Truth” is that technical decay is built into the architecture. In the old regime, you wrote code that thought; now, you write data that fits. Engineering excellence in Manifest V3 is no longer measured by the elegance of your JavaScript listeners, but by the efficiency of your hybrid ruleset architecture. You must now balance a massive, static foundation for baseline stability with a lean, dynamic layer for volatile, real-time threats.

```json
{
  "name": "Hybrid Filtering Strategy Template",
  "version": "1.0.0",
  "manifest_version": 3,
  "declarative_net_request": {
    "rule_resources": [
      {
        "id": "base_rules",
        "enabled": true,
        "path": "rules/static_base_filter.json"
      },
      {
        "id": "user_overrides",
        "enabled": true,
        "path": "rules/static_user_config.json"
      }
    ]
  },
  "permissions": ["declarativeNetRequest", "declarativeNetRequestFeedback"]
}
```

By capping the expressive power of extensions, the browser has lowered the ceiling on what is possible. You are no longer a sovereign interceptor; you are a budget manager working within the limits of a C++ constant. The engineering challenge ahead is finding the functional gaps in this rigid framework to ensure privacy remains a functional reality rather than a declarative suggestion.
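On the static side of a hybrid setup, the extension still has to decide at runtime which of its bundled rulesets are switched on. The sketch below assumes hypothetical helpers (`planRulesetToggle`, `applyRulesetToggle`) and a hypothetical third ruleset id, `regional_de`; it uses the 50-ruleset cap quoted earlier and the real `getEnabledRulesets` and `updateEnabledRulesets` calls. It does not check the separate limit on total enabled static rules, which an actual implementation would also need to respect.

```javascript
// Cap on simultaneously enabled static rulesets, as quoted in this article.
const MAX_ENABLED_STATIC_RULESETS = 50;

// Compute the enable/disable delta needed to reach the desired set.
// `desired` is ordered by priority; anything past the cap is dropped.
function planRulesetToggle(currentlyEnabled, desired) {
  const wanted = desired.slice(0, MAX_ENABLED_STATIC_RULESETS);
  const current = new Set(currentlyEnabled);
  const target = new Set(wanted);
  return {
    enableRulesetIds: wanted.filter((id) => !current.has(id)),
    disableRulesetIds: currentlyEnabled.filter((id) => !target.has(id)),
  };
}

// Extension-only wrapper: read the current state, then apply the delta.
async function applyRulesetToggle(desired) {
  const enabled = await chrome.declarativeNetRequest.getEnabledRulesets();
  const plan = planRulesetToggle(enabled, desired);
  await chrome.declarativeNetRequest.updateEnabledRulesets(plan);
  return plan;
}
```

For example, `planRulesetToggle(["base_rules", "user_overrides"], ["base_rules", "regional_de"])` yields a plan that enables `regional_de` and disables `user_overrides`, leaving `base_rules` untouched.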

Related Citations & References

- Improving content filtering in Manifest V3 | Blog | Chrome for Developers
- Inconsistency: DNR `modifyHeaders` supported headers · Issue #372 · w3c/webextensions · GitHub
- extensions/common/api/declarative_net_request.idl – chromium/src – Git at Google
- Applying some of the regional lists produces error · Issue #628 · uBlockOrigin/uBOL-home · GitHub
- Mozilla Solves the Manifest V3 Puzzle To Save Ad Blockers From Chromapocalypse – CPO Magazine
- The first prototype of AdGuard MV3 extension is here
- Chrome Manifest V3 Deadline: Ad Blocker Impact 2025 | byteiota
- Manifest V3: The Ghostery Perspective | Ghostery
- What to do about Manifest V3 · Issue #666 · ipfs/ipfs-companion · GitHub
- Reddit – Please wait for verification
- Chrome's Manifest V3 – Improving Privacy? | Ghostery
- Best Content Filtering Software: Evidence-Based Selection Criteria
- AdFlush | Hacker News
- Rfp2363 Lee.Pdf
- [DNR] in contrast to `webRequestBlocking`, `declarativeNetRequest` rules are not applied if the request is redirected · Issue #694 · w3c/webextensions · GitHub
- Inconsistency: declarativeNetRequest rules able to block background requests from other extensions · Issue #369 · w3c/webextensions · GitHub
- Proposal: declarativeNetRequest: matching based on response headers · Issue #460 · w3c/webextensions · GitHub